The CXL consortium has had a regular presence at FMS (which rechristened itself from ‘Flash Memory Summit’ to the ‘Future of Memory and Storage’ this year). Back at FMS 2022, the consortium announced v3.0 of the CXL specification. This was followed by CXL 3.1’s introduction at Supercomputing 2023. Having started off as a host-to-device interconnect standard, CXL gradually subsumed competing standards such as OpenCAPI and Gen-Z. As a result, the specification now encompasses a wide variety of use-cases by building a protocol on top of the ubiquitous PCIe expansion bus. The CXL consortium comprises heavyweights such as AMD and Intel, as well as a large number of startup companies attempting to play in different segments on the device side. At FMS 2024, CXL had a prime position in the booth demos of many vendors.

The migration of server platforms from DDR4 to DDR5, along with the rise of workloads demanding large RAM capacity (but not particularly sensitive to either memory bandwidth or latency), has made memory expansion modules among the first widely available CXL devices. Over the last couple of years, we have had product announcements from Samsung and Micron in this area.

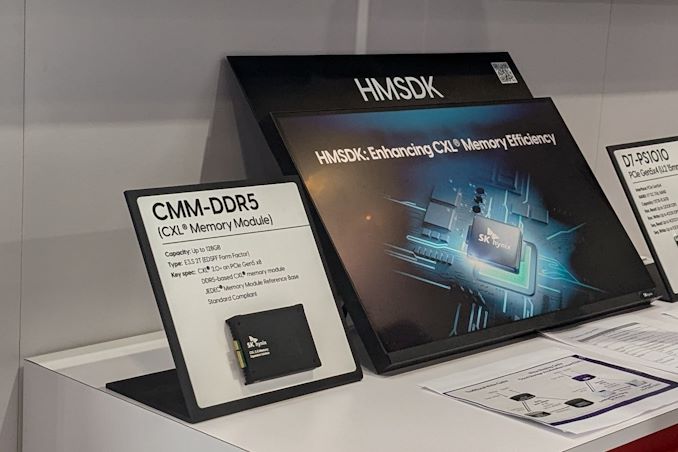

SK hynix CMM-DDR5 CXL Memory Module and HMSDK

At FMS 2024, SK hynix was showing off their DDR5-based CMM-DDR5 CXL memory module with a 128 GB capacity. The company was also detailing their associated Heterogeneous Memory Software Development Kit (HMSDK) – a set of libraries and tools at both the kernel and user levels aimed at increasing the ease of use of CXL memory. This is achieved in part by considering the memory pyramid / hierarchy and relocating the data between the server’s main memory (DRAM) and the CXL device based on usage frequency.
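The usage-frequency idea described above can be illustrated with a minimal sketch. This is not HMSDK's actual API or algorithm: the function `place_pages`, the page-count capacity, and the access trace are all hypothetical, and a real tiering implementation would rely on kernel-level access monitoring rather than a Python counter.

```python
from collections import Counter


def place_pages(access_counts, dram_capacity_pages):
    """Split pages into (dram_pages, cxl_pages) by access frequency.

    Hypothetical policy: the most frequently accessed pages stay in
    DRAM until it is full, and everything else is placed on the CXL
    device.
    """
    # Rank pages from hottest to coldest; ties are broken by page id
    ranked = sorted(access_counts, key=lambda page: (-access_counts[page], page))
    dram_pages = set(ranked[:dram_capacity_pages])
    cxl_pages = set(ranked[dram_capacity_pages:])
    return dram_pages, cxl_pages


# Hypothetical access trace: page ids touched by a workload
trace = [1, 2, 1, 3, 1, 2, 4, 1, 2, 5]
counts = Counter(trace)

dram, cxl = place_pages(counts, dram_capacity_pages=2)
print("DRAM:", sorted(dram))
print("CXL:", sorted(cxl))
```

In this toy trace, pages 1 and 2 are accessed most often and would remain in DRAM, while the rarely touched pages would be relocated to the CXL module.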

The CMM-DDR5 CXL memory module comes in the EDSFF form-factor (E3.S 2T) with a PCIe 5.0 x8 host interface. The internal memory is based on 1α technology DRAM, and the device promises DDR5-class bandwidth and latency within a single NUMA hop. As these memory modules are meant to be used in datacenters and enterprises, the firmware includes features for RAS (reliability, availability, and serviceability) along with secure boot and other management features.

SK hynix was also demonstrating Niagara 2.0 – a hardware solution (currently based on FPGAs) to enable memory pooling and sharing – i.e., connecting multiple CXL memories to allow different hosts (CPUs and GPUs) to optimally share their capacity. The previous version only allowed capacity sharing, but the latest version also enables data sharing. SK hynix had presented these solutions at the CXL DevCon 2024 earlier this year, but some progress seems to have been made in finalizing the specifications of the CMM-DDR5 at FMS 2024.

Microchip and Micron Demonstrate CZ120 CXL Memory Expansion Module

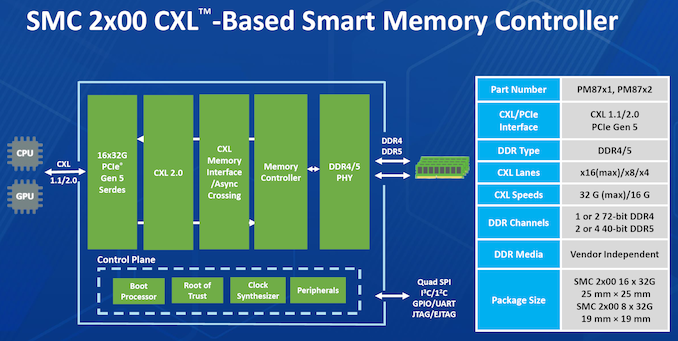

Micron unveiled the CZ120 CXL Memory Expansion Module last year. It is based on the Microchip SMC 2000 series CXL memory controller. At FMS 2024, Micron and Microchip had a demonstration of the module on a Granite Rapids server.

Additional insights into the SMC 2000 controller were also provided.

The CXL memory controller incorporates DRAM die failure handling, and Microchip provides diagnostics and debug tools to analyze failed modules. The memory controller also supports ECC, which forms part of the enterprise-class RAS feature set of the SMC 2000 series. Its flexibility ensures that SMC 2000-based CXL memory modules using DDR4 can complement the main DDR5 DRAM in servers that support only the latter.

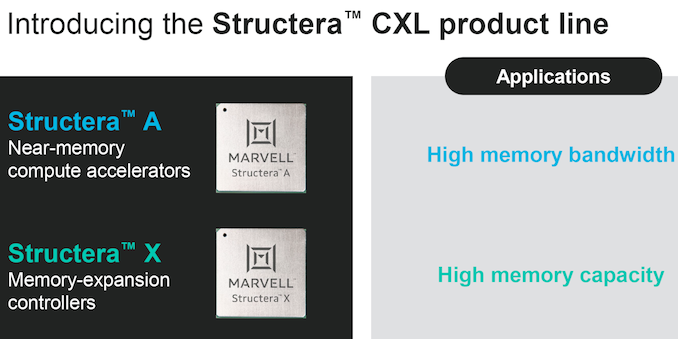

Marvell Announces Structera CXL Product Line

A few days prior to the start of FMS 2024, Marvell had announced a new CXL product line under the Structera tag. At FMS 2024, we had a chance to discuss this new line with Marvell and gather some additional insights.

Unlike other CXL device solutions focusing on memory pooling and expansion, the Structera product line also incorporates a compute accelerator part in addition to a memory-expansion controller. All of these are built on TSMC’s 5nm technology.

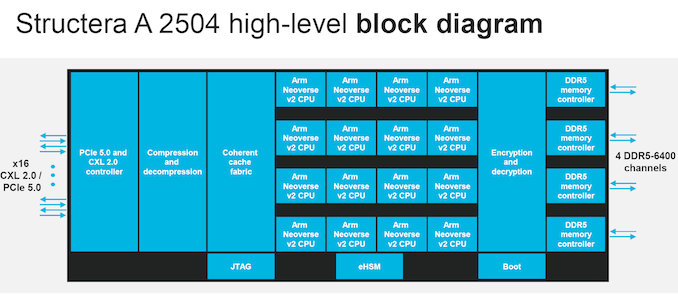

The compute accelerator part, the Structera A 2504 (A for Accelerator), is a PCIe 5.0 x16 CXL 2.0 device with 16 integrated Arm Neoverse V2 (Demeter) cores at 3.2 GHz. It incorporates four DDR5-6400 channels with support for up to two DIMMs per channel, along with in-line compression and decompression. The integration of powerful server-class Arm CPU cores means that the part scales the memory bandwidth available per core, while also scaling the compute capabilities.
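To put the per-core bandwidth point in rough numbers, the sketch below works out the theoretical peak DRAM bandwidth behind the accelerator's 16 cores. It assumes a 64-bit data path per DDR5-6400 channel (ECC bits excluded), which is an assumption and not a Marvell-published figure; sustained bandwidth will be lower than these peaks. The helper name `ddr_channel_peak_gbps` is hypothetical.

```python
def ddr_channel_peak_gbps(mega_transfers_per_s, bus_width_bits=64):
    """Theoretical peak bandwidth of one DDR channel in GB/s.

    bus_width_bits=64 is an assumption (data bits only, no ECC).
    """
    bytes_per_transfer = bus_width_bits / 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 for GB/s
    return mega_transfers_per_s * bytes_per_transfer / 1000


CHANNELS = 4   # four DDR5-6400 channels, per the announced specifications
CORES = 16     # 16 Arm Neoverse V2 cores

per_channel = ddr_channel_peak_gbps(6400)
total = CHANNELS * per_channel
per_core = total / CORES

print(f"Per channel: {per_channel:.1f} GB/s")
print(f"All channels: {total:.1f} GB/s")
print(f"Per core: {per_core:.1f} GB/s")
```

Under these assumptions, the four channels offer about 204.8 GB/s in aggregate, or roughly 12.8 GB/s of local peak bandwidth per core.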

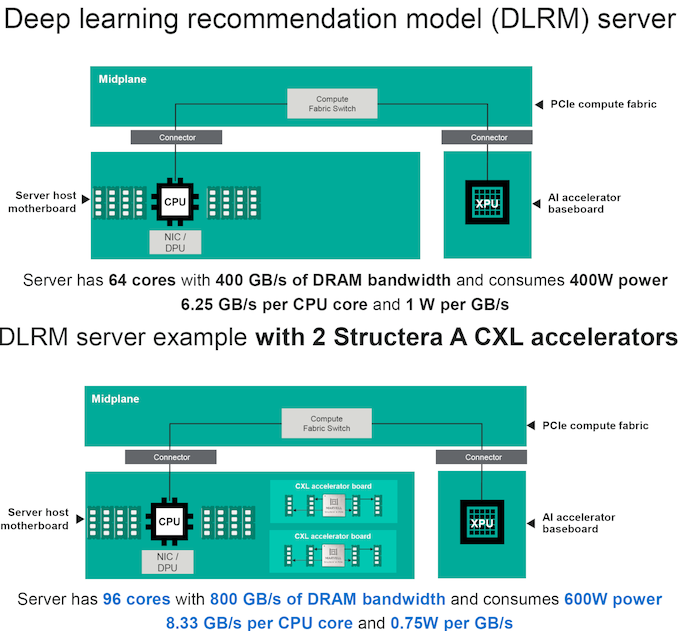

Applications such as Deep-Learning Recommendation Models (DLRM) can benefit from the compute capability available in the CXL device. The scaling in the bandwidth availability is also accompanied by reduced energy consumption for the workload. The approach also contributes towards disaggregation within the server for a better thermal design as a whole.

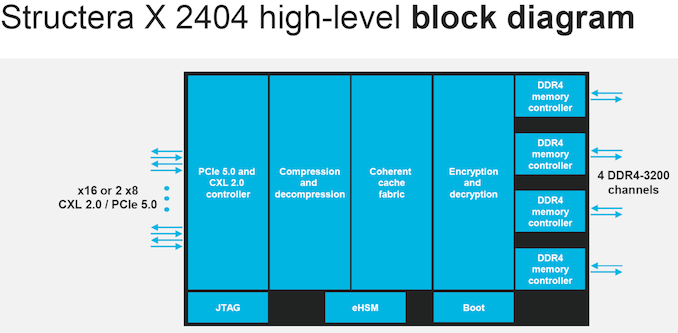

The Structera X 2404 (X for eXpander) will be available as a PCIe 5.0 device (either a single x16 or two x8 links) with four DDR4-3200 channels (up to 3 DIMMs per channel). Features such as in-line (de)compression, encryption / decryption, and secure boot with hardware support are present in the Structera X 2404 as well. Compared to the 100 W TDP of the Structera A 2504, Marvell expects this part to consume around 30 W. The primary purpose of this part is to enable hyperscalers to recycle DDR4 DIMMs (up to 6 TB per expander) while increasing server memory capacity.

Marvell also has a Structera X 2504 part that supports four DDR5-6400 channels (with two DIMMs per channel for up to 4 TB per expander). Other aspects remain the same as that of the DDR4-recycling part.
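The capacity figures quoted for the two expanders can be sanity-checked with some simple arithmetic. The sketch below derives the DIMM slot count and the per-DIMM capacity implied by the stated maximums; it assumes 1 TB = 1024 GB and that every slot is populated with identical DIMMs, and the helper name `implied_dimm_size_gb` is hypothetical.

```python
GB_PER_TB = 1024  # assumption: binary terabytes


def implied_dimm_size_gb(max_capacity_tb, channels, dimms_per_channel):
    """Return (slot_count, per-DIMM GB) implied by a maximum capacity."""
    slots = channels * dimms_per_channel
    return slots, max_capacity_tb * GB_PER_TB / slots


# Structera X 2404: four DDR4 channels, 3 DIMMs per channel, up to 6 TB
x2404 = implied_dimm_size_gb(6, channels=4, dimms_per_channel=3)

# Structera X 2504: four DDR5 channels, 2 DIMMs per channel, up to 4 TB
x2504 = implied_dimm_size_gb(4, channels=4, dimms_per_channel=2)

print("X 2404:", x2404)
print("X 2504:", x2504)
```

Both maximums work out to 512 GB per slot (12 slots for the DDR4 part, 8 for the DDR5 part). Whether the headline figures assume DIMMs of that size or factor in the in-line compression is not stated.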

The company stressed some unique aspects of the Structera product line – the inline compression optimizes available DRAM capacity, and the 3 DIMMs per channel support for the DDR4 expander maximizes the amount of DRAM per expander (compared to competing solutions). The 5nm process lowers the power consumption, and the parts support accesses from multiple hosts. The integration of Arm Neoverse V2 cores appears to be a first for a CXL accelerator, and enables delegation of compute tasks to improve overall performance of the system.

While Marvell announced specifications for the Structera parts, it does appear that sampling is at least a few quarters away. One of the interesting aspects of Marvell’s roadmaps / announcements in recent years has been their focus on creating products tuned to the demands of high-volume customers. The Structera product line is no different – hyperscalers are hungry to recycle their DDR4 memory modules and apparently can’t wait to get their hands on the expander parts.

CXL is just starting its slow ramp-up, and the hockey stick segment of the growth curve is definitely not in the near term. However, as more host systems with CXL support get deployed, products like the Structera accelerator line start to make sense from a server efficiency viewpoint.